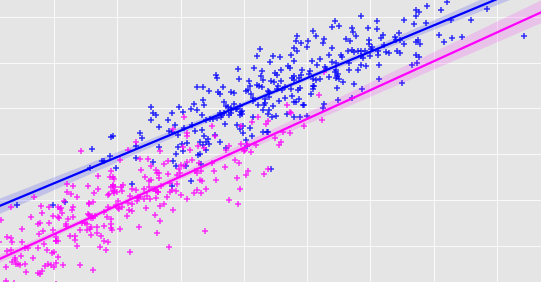

Single Variable Linear Regression. This post describes what cost functions are in machine learning as they relate to linear regression, a supervised learning algorithm. Simple linear regression is also called univariate linear regression, where univariate means one variable. Multiple linear regression is a bit different: instead of just one independent variable, we can include as many independent variables as we like.
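To make the idea of a cost function concrete, here is a minimal sketch for the single-variable case. It assumes the half mean squared error, which is one common convention; the names `predict`, `squared_error_cost`, `theta0`, `theta1` and the training data are made up for illustration and do not come from the post.

```python
def predict(theta0, theta1, x):
    """Hypothesis for single-variable linear regression: h(x) = theta0 + theta1 * x."""
    return theta0 + theta1 * x


def squared_error_cost(theta0, theta1, xs, ys):
    """Half the mean squared error: J = 1/(2m) * sum((h(x) - y)^2)."""
    m = len(xs)
    total = sum((predict(theta0, theta1, x) - y) ** 2 for x, y in zip(xs, ys))
    return total / (2 * m)


# Made-up training data lying exactly on the line y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]

# Parameters that match the data give zero cost.
perfect_cost = squared_error_cost(1.0, 2.0, xs, ys)

# A flat line at zero fits poorly and gives a larger cost.
poor_cost = squared_error_cost(0.0, 0.0, xs, ys)
```

The lower the cost, the closer the line is to the training examples, so fitting the model amounts to searching for the parameters that minimize this number.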

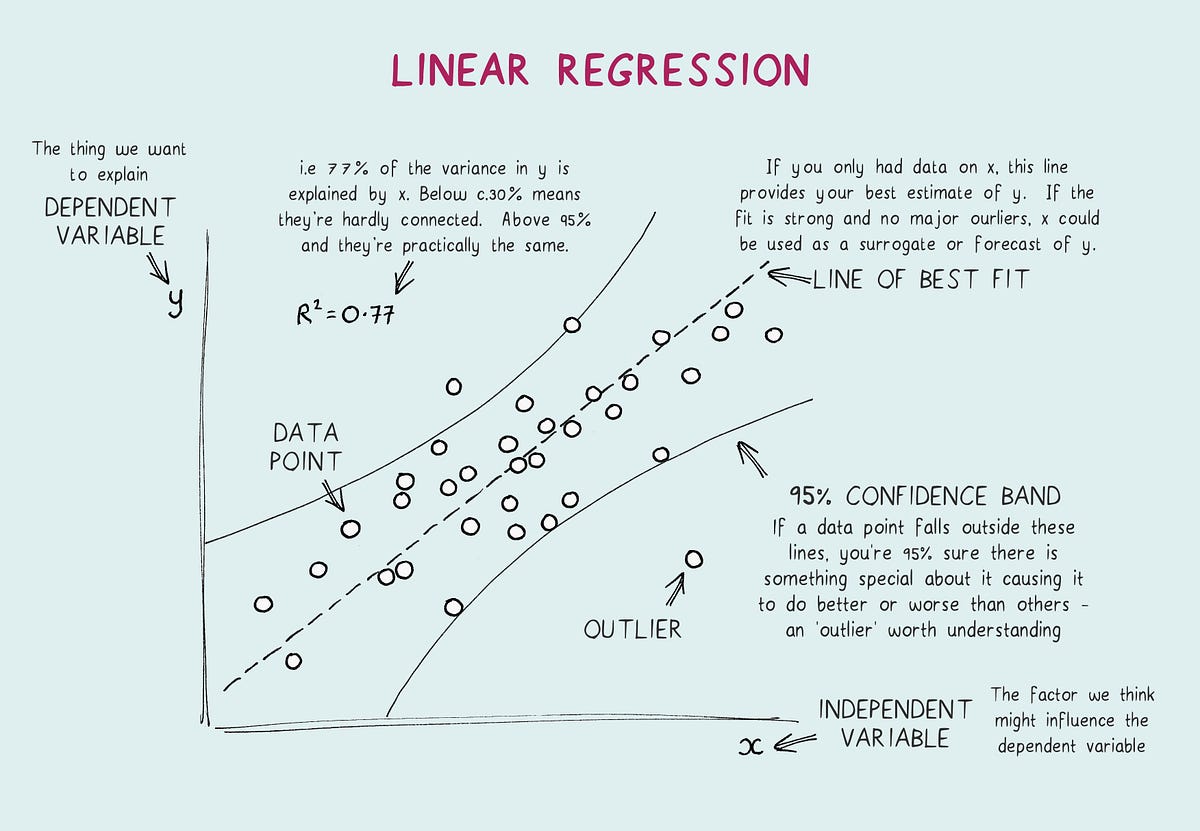

The accompanying script (author: Pawanvir Singh, UTF-8 encoded) imports `numpy` as `np`, `pandas` as `pd`, `pyplot` from `matplotlib` as `plt`, `LinearRegression` from `sklearn.linear_model`, and utilities from `sklearn.metrics`. Simple linear regression is a statistical method for obtaining a formula that predicts the values of one variable from another, where a causal relationship between the two variables is assumed. The pair (x, y) denotes a single generic training example. The line of best fit can then be used to estimate how many homicide deaths there would be for ages we don't have data on. Linear regression also rests on a few assumptions.
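As a rough illustration of fitting a line of best fit and using it to predict a value we have no data for, the sketch below uses `LinearRegression` from scikit-learn. The data is synthetic (an assumed line y = 3x + 2 with a little noise), not the homicide data mentioned in the post, and the variable names are chosen only for this example.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

# Synthetic data: one feature x and a target y close to y = 3x + 2.
rng = np.random.default_rng(0)
x = np.arange(10, dtype=float)
y = 3.0 * x + 2.0 + rng.normal(scale=0.1, size=x.shape)

# scikit-learn expects a 2-D feature matrix of shape (n_samples, n_features).
X = x.reshape(-1, 1)

model = LinearRegression()
model.fit(X, y)

slope = model.coef_[0]
intercept = model.intercept_

# Predict the target for an x value that is not in the training data.
x_new = np.array([[12.5]])
y_new = model.predict(x_new)[0]
```

The fitted `slope` and `intercept` define the line of best fit, and `model.predict` simply evaluates that line at the new input.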

Single variable linear regression is the tool to find this line of best fit. With gradient descent, the update direction at each step is computed as `delta = (1/m) * X' * (predictions - y)`, and the cost after each iteration is stored in a vector initialized as `J_history = zeros(num_iters, 1)`. Between simple and multiple linear regression, the interpretation of the coefficients differs as well.
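The gradient descent step can be sketched in NumPy as follows. The names `delta`, `predictions`, `J_history` and `num_iters` follow the text; the learning rate `alpha`, the parameter vector `theta`, the helper `compute_cost` (half mean squared error, an assumed convention) and the data are illustrative assumptions rather than the original author's code.

```python
import numpy as np


def compute_cost(X, y, theta):
    """Half mean squared error: J = 1/(2m) * sum((X @ theta - y)^2)."""
    m = len(y)
    residuals = X @ theta - y
    return float(residuals @ residuals) / (2 * m)


def gradient_descent(X, y, theta, alpha, num_iters):
    """Batch gradient descent; returns the fitted theta and the cost after each step."""
    m = len(y)
    J_history = np.zeros(num_iters)
    for i in range(num_iters):
        predictions = X @ theta
        # Gradient of the cost with respect to theta.
        delta = (1.0 / m) * (X.T @ (predictions - y))
        theta = theta - alpha * delta
        J_history[i] = compute_cost(X, y, theta)
    return theta, J_history


# Made-up data on the line y = 2x + 1; the column of ones models the intercept.
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = 2.0 * x + 1.0
X = np.column_stack([np.ones_like(x), x])

theta, J_history = gradient_descent(X, y, np.zeros(2), alpha=0.05, num_iters=5000)
```

Plotting `J_history` against the iteration number is a quick way to check that the learning rate is small enough: the cost should fall steadily toward its minimum.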