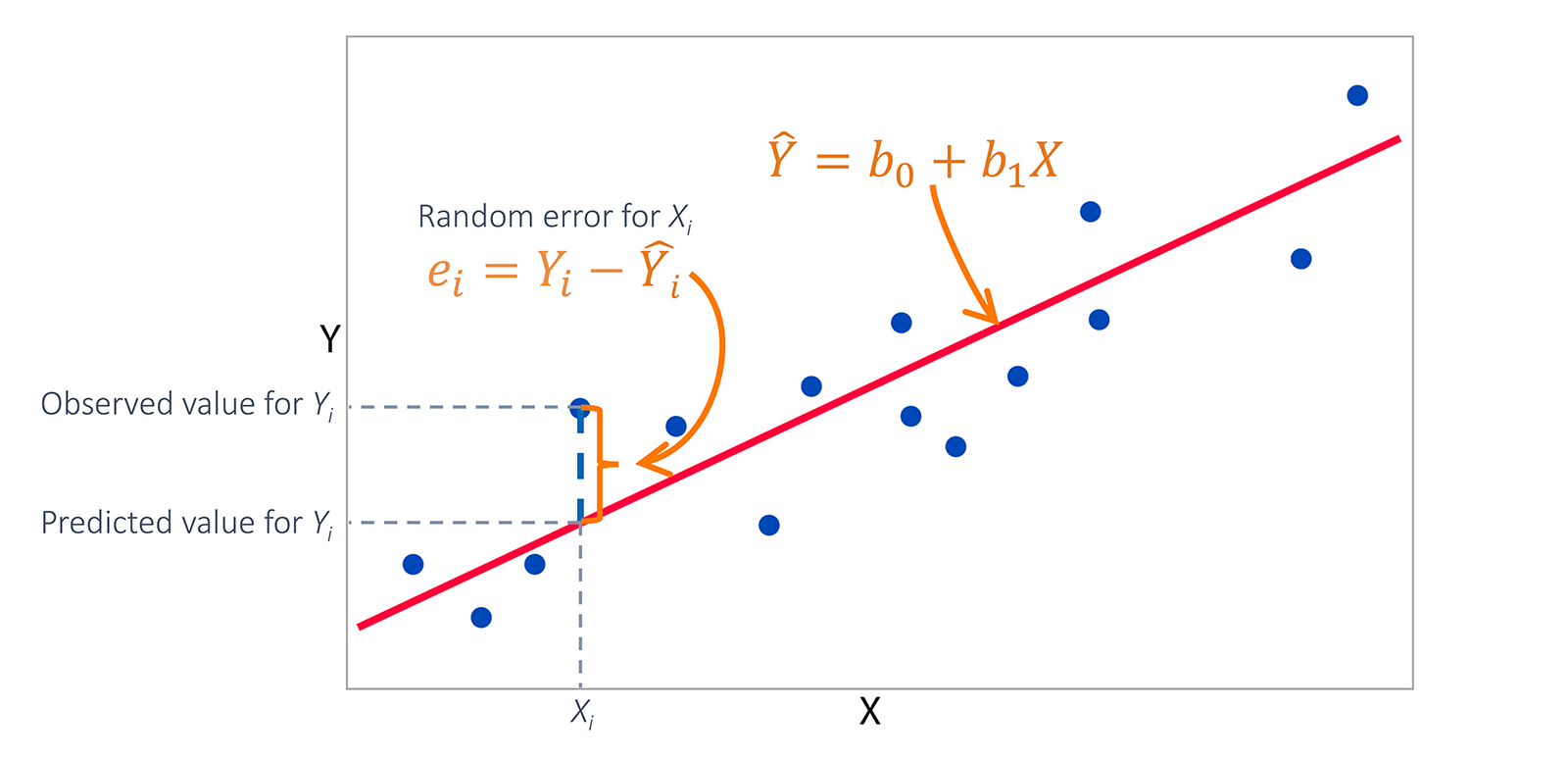

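Sum of the Squared Errors. The sum of squares error (SSE) is the sum of squared differences between predicted data points $\hat{y}_i$ and observed data points $y_i$, that is, $\mathrm{SSE} = \sum_i (y_i - \hat{y}_i)^2$. It is also known as the residual sum of squares or the sum of squared errors of prediction, and it essentially measures the variation of the modeling errors. Some procedures, such as those that sort data into groups (segments), reduce SSE by performing repeated calculations (iterations) designed to bring the groups in tighter. When several candidate lines are fitted to the same $N$ data points, the MSE of each line is $\mathrm{SSE}_1/N, \mathrm{SSE}_2/N, \dots, \mathrm{SSE}_n/N$. Because $N$ is the same for every line, the line with the least sum of squared errors is also the line with the minimum MSE.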
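As a minimal sketch of the SSE and MSE comparison described here, the snippet below scores a few candidate lines on the same data. The data points and the candidate slopes and intercepts are hypothetical, chosen only for illustration.

```python
def sse(observed, predicted):
    """Sum of squared differences between observed and predicted values."""
    return sum((y - y_hat) ** 2 for y, y_hat in zip(observed, predicted))


def mse(observed, predicted):
    """Mean squared error: SSE divided by the number of points N."""
    return sse(observed, predicted) / len(observed)


# Hypothetical data and candidate lines (slope, intercept), for illustration only.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]
candidates = [(1.0, 0.0), (2.0, 0.0), (3.0, -1.0)]

# Score every candidate line on the same N points.
scores = []
for slope, intercept in candidates:
    predicted = [slope * x + intercept for x in xs]
    scores.append((sse(ys, predicted), mse(ys, predicted)))

# Dividing by the same N does not change the ranking,
# so the index of the best line is the same under SSE and MSE.
best_by_sse = min(range(len(candidates)), key=lambda k: scores[k][0])
best_by_mse = min(range(len(candidates)), key=lambda k: scores[k][1])
print(best_by_sse, best_by_mse)
```

Both selections pick the same candidate, which is the point of the argument: MSE is SSE rescaled by a constant when every line is evaluated on the same data.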

The sum of squared errors is a measure of the variation of the measured data around the true mean of the data. When you have a set of data values, it is useful to be able to find how closely related those values are. Arguably the most common loss function used in statistics and machine learning is the sum of squared errors (SSE) loss function, so many best-fit algorithms use the least sum of squared errors method to find a regression line. Under the classical linear model assumptions, the resulting least squares estimator is an unbiased estimator of the parameters.
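To make the least squares idea concrete, here is a small sketch that fits a regression line with the standard closed-form formulas for simple linear regression. The function names and the sample data are assumptions made for this example, not something taken from a particular library.

```python
def fit_line(xs, ys):
    """Return (slope, intercept) of the least squares line through the points."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # Closed-form solution: slope = S_xy / S_xx, intercept = y_mean - slope * x_mean.
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    slope = s_xy / s_xx
    intercept = y_mean - slope * x_mean
    return slope, intercept


def line_sse(xs, ys, slope, intercept):
    """Sum of squared errors of the line y = slope * x + intercept."""
    return sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))


# Hypothetical sample data, for illustration only.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 2.0, 4.0]

slope, intercept = fit_line(xs, ys)
best_sse = line_sse(xs, ys, slope, intercept)

# Nudging the slope away from the fitted value should only increase SSE.
nudged_sse = line_sse(xs, ys, slope + 0.1, intercept)
print(slope, intercept, best_sse, nudged_sse)
```

The fitted line is the one with the smallest SSE among all straight lines, so any perturbation of the slope or intercept gives a larger value.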

SSE is a measure of sampling error.

The regression sum of squares is $\mathrm{SSR} = \sum_i (\hat{y}_i - \bar{y})^2$, where $\bar{y}$ is the mean of the observed values. Under the classical linear model assumptions, the least squares estimator is the best linear estimator of the parameters. In the MSE comparison across candidate lines, $\mathrm{SSE}_n$ denotes the sum of squared errors of the $n$-th line.
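The following sketch computes SSR next to SSE for a least squares line. It also computes the total sum of squares (called `sst` here, a quantity not named in the text above) to illustrate the standard identity that, for a least squares line with an intercept, the total equals SSR plus SSE. The data and helper name are hypothetical.

```python
def fit_line(xs, ys):
    """Return (slope, intercept) of the least squares line through the points."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    slope = s_xy / s_xx
    return slope, y_mean - slope * x_mean


# Hypothetical sample data, for illustration only.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 2.0, 4.0]

slope, intercept = fit_line(xs, ys)
y_mean = sum(ys) / len(ys)
fitted = [slope * x + intercept for x in xs]

# SSR: squared deviations of the fitted values from the mean of y.
ssr = sum((f - y_mean) ** 2 for f in fitted)
# SSE: squared deviations of the observations from the fitted values.
sse = sum((y - f) ** 2 for y, f in zip(ys, fitted))
# Total sum of squares: squared deviations of the observations from the mean.
sst = sum((y - y_mean) ** 2 for y in ys)

print(ssr, sse, sst)
```

For this data the three sums come out to roughly 3.2, 1.8, and 5.0, so SSR and SSE add up to the total, up to floating-point rounding.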