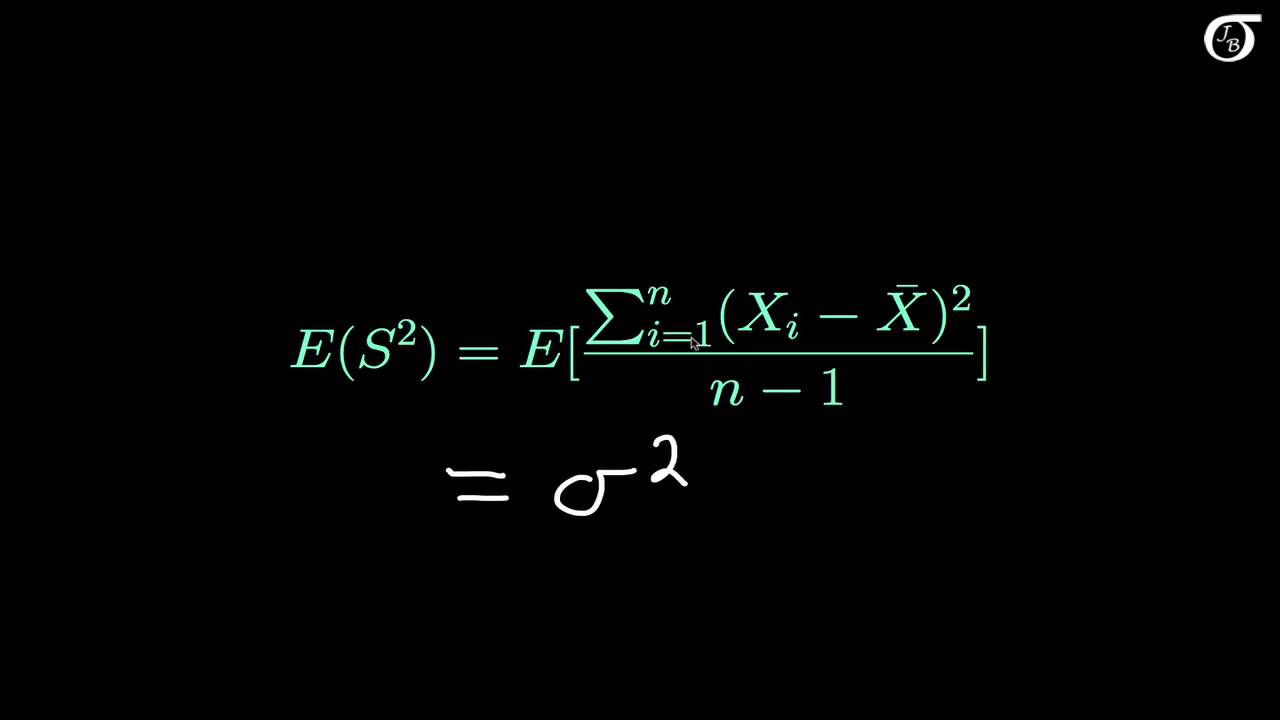

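Unbiased estimate of variance. An estimator is unbiased when its expected value equals the parameter it targets; the observed value of the estimator is the number it produces for one particular sample. The estimator for the population variance is indeed unbiased, and the same notion of unbiasedness underlies the interpretation of the Gauss-Markov (G-M) theorem.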

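A proof that the sample variance with $n-1$ in the denominator is an unbiased estimator of the population variance uses the fact that, for i.i.d. observations, the sampling distribution of the sample mean $\bar X = \frac{1}{n}\sum_{i=1}^n X_i$ has mean $\mu$ and variance $\sigma^2/n$. The mean follows from linearity of expectation:

$$E[\bar X] = \sum_{i=1}^n \frac{E[X_i]}{n} = \frac{n\,E[X_1]}{n} = \mu.$$

Also, by the weak law of large numbers, the variance estimator $\hat\sigma^2$ converges in probability to $\sigma^2$, so it is consistent as well as unbiased. More generally, the more (Fisher) information the sample carries about a parameter, the lower the smallest variance an unbiased estimator of that parameter can achieve (the Cramér-Rao lower bound). The Gauss-Markov theorem makes a related statement for linear regression: the OLS coefficient estimators $\hat\beta_j$ are the best of all linear unbiased estimators of $\beta_j$, where best means minimum variance.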
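One standard short argument (not necessarily the author's own version) that turns these two facts into the unbiasedness of $s^2 = \frac{1}{n-1}\sum_{i=1}^n (X_i-\bar X)^2$, assuming $X_1,\dots,X_n$ are i.i.d. with mean $\mu$ and variance $\sigma^2$. The first line expands $(X_i-\bar X) = (X_i-\mu) - (\bar X-\mu)$ and uses $\sum_{i=1}^n (X_i-\mu) = n(\bar X-\mu)$; the second uses $E[(X_i-\mu)^2]=\sigma^2$ and $E[(\bar X-\mu)^2] = \operatorname{Var}(\bar X) = \sigma^2/n$:

$$
\begin{aligned}
\sum_{i=1}^n (X_i-\bar X)^2 &= \sum_{i=1}^n (X_i-\mu)^2 - n(\bar X-\mu)^2,\\
E\Big[\sum_{i=1}^n (X_i-\bar X)^2\Big] &= n\sigma^2 - n\cdot\frac{\sigma^2}{n} = (n-1)\sigma^2,\\
E[s^2] = \frac{1}{n-1}\,E\Big[\sum_{i=1}^n (X_i-\bar X)^2\Big] &= \sigma^2.
\end{aligned}
$$

Dividing by $n$ instead would give an expected value of $\frac{n-1}{n}\sigma^2$, which is why the $n-1$ denominator is needed.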

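The resulting estimator, called the minimum variance unbiased estimator (MVUE), has the smallest variance among all unbiased estimators, for every possible value of $\theta$:

$$\operatorname{Var}_Y\big[\hat\theta_{\mathrm{MVUE}}(Y)\big] \le \operatorname{Var}_Y\big[\tilde\theta(Y)\big] \quad \text{for every unbiased } \tilde\theta \text{ and every } \theta. \qquad (2)$$

It is important to note that a uniformly minimum variance unbiased estimator may not exist.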
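To illustrate the MVUE idea, here is a minimal Monte Carlo sketch in Python under assumed settings (normal data with $\mu = 0$, $\sigma = 1$, $n = 25$; the function name `compare_mean_median` and all parameter values are hypothetical). For a symmetric distribution such as the normal, both the sample mean and the sample median are unbiased estimators of $\mu$, and for normal data the sample mean is the MVUE, so its simulated variance should come out smaller.

```python
import random
import statistics


def compare_mean_median(mu=0.0, sigma=1.0, n=25, trials=10_000, seed=1):
    """Monte Carlo estimate of the variance of two unbiased estimators of mu.

    Assumes i.i.d. normal samples; all defaults are illustrative choices.
    """
    rng = random.Random(seed)
    means, medians = [], []
    for _ in range(trials):
        sample = [rng.gauss(mu, sigma) for _ in range(n)]
        means.append(statistics.fmean(sample))     # sample mean
        medians.append(statistics.median(sample))  # sample median
    # Spread of each estimator across repeated samples
    return statistics.pvariance(means), statistics.pvariance(medians)


var_mean, var_median = compare_mean_median()
print(f"Var(sample mean)   ~ {var_mean:.4f}  (theory: sigma^2/n = {1 / 25:.4f})")
print(f"Var(sample median) ~ {var_median:.4f}")
```

For large $n$ the median's variance is roughly $\pi/2$ times that of the mean under normality, so both estimators hit $\mu$ on average but the mean does so with less spread.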

An estimator is a statistic used to approximate a population parameter. When the population mean $\mu$ is known, the variance can be estimated by

$$\hat\sigma^2 = \frac{1}{n}\sum_{k=1}^n (X_k - \mu)^2.$$

Since $E[(X_k-\mu)^2] = \sigma^2$ for every $k$, linearity of expectation gives $E[\hat\sigma^2] = \sigma^2$, so $\hat\sigma^2$ is an unbiased estimator of $\sigma^2$. When $\mu$ is unknown and must be replaced by the sample mean $\bar X$, the formula for computing the sample variance has $n-1$ in the denominator: $s^2 = \frac{1}{n-1}\sum_{k=1}^n (X_k - \bar X)^2$.

Proof of unbiasedness of the sample variance estimator. As I received some remarks about the unnecessary length of this proof, I provide a shorter version here. In many applications of statistics and econometrics, among other fields, it is necessary to estimate the variance of a population from a sample.
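A quick numerical check of these claims, written as a minimal Python sketch under assumed settings (normal data with $\mu = 0$, $\sigma = 2$ so $\sigma^2 = 4$, and a small sample size $n = 5$ to make the bias visible; the helper names `variance_estimators` and `average_estimates` are hypothetical). It averages three estimators over many simulated samples: $\hat\sigma^2$ with known $\mu$, the plug-in version that uses $\bar X$ but still divides by $n$, and $s^2$ with $n-1$.

```python
import random
import statistics


def variance_estimators(sample, mu):
    """Three estimates of sigma^2 computed from one sample."""
    n = len(sample)
    xbar = statistics.fmean(sample)
    ss_known = sum((x - mu) ** 2 for x in sample)     # deviations from the true mean
    ss_sample = sum((x - xbar) ** 2 for x in sample)  # deviations from the sample mean
    return (
        ss_known / n,         # sigma-hat^2 with known mu: unbiased
        ss_sample / n,        # plug-in with divisor n: biased low by factor (n-1)/n
        ss_sample / (n - 1),  # s^2 with divisor n-1: unbiased
    )


def average_estimates(mu=0.0, sigma=2.0, n=5, trials=50_000, seed=0):
    """Monte Carlo approximation of E[estimator] for each of the three estimators."""
    rng = random.Random(seed)
    totals = [0.0, 0.0, 0.0]
    for _ in range(trials):
        sample = [rng.gauss(mu, sigma) for _ in range(n)]
        for i, estimate in enumerate(variance_estimators(sample, mu)):
            totals[i] += estimate
    return [t / trials for t in totals]


known_mu, plug_in, corrected = average_estimates()
# True sigma^2 = 4; the divisor-n plug-in should average near (n-1)/n * 4 = 3.2.
print(f"known mu, /n        : {known_mu:.3f}")
print(f"sample mean, /n     : {plug_in:.3f}")
print(f"sample mean, /(n-1) : {corrected:.3f}")
```

In Python's standard library, `statistics.variance` uses the $n-1$ denominator and `statistics.pvariance` uses $n$, matching the last two estimators above.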